AI in Finance Has Evolved. Most Teams Haven’t.

Lessons from putting AI into real finance operations

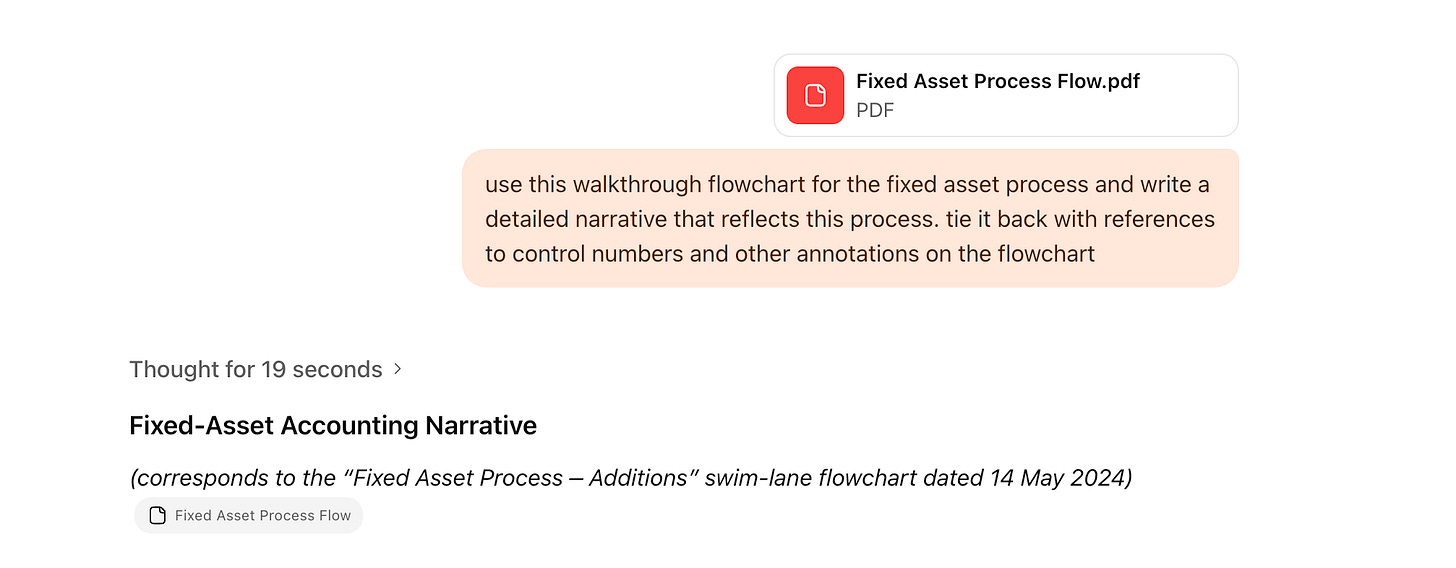

Over the past couple of years, nearly every finance team has experimented with AI in some form. For many, the first experience looked something like this: paste a document into ChatGPT, ask it to extract key fields, then copy the output into a spreadsheet or journal entry template.

That moment is genuinely impressive. The model reads the document correctly, pulls out relevant information, and produces something usable in seconds. It becomes clear very quickly that the technology itself is far more capable than most of us expected.

The problem emerges when that same workflow meets real operations.

Month-end arrives. There are hundreds or thousands of transactions. Documents are coming in through email, shared drives, portals, and uploads. At that point, the copy-and-paste approach stops being helpful. It does not scale, and it does not meaningfully reduce operational risk.

This is where many finance teams are today. They have seen what AI can do in isolation, but they have not yet figured out how to make it work consistently, reliably, and inside the systems where accounting actually happens.

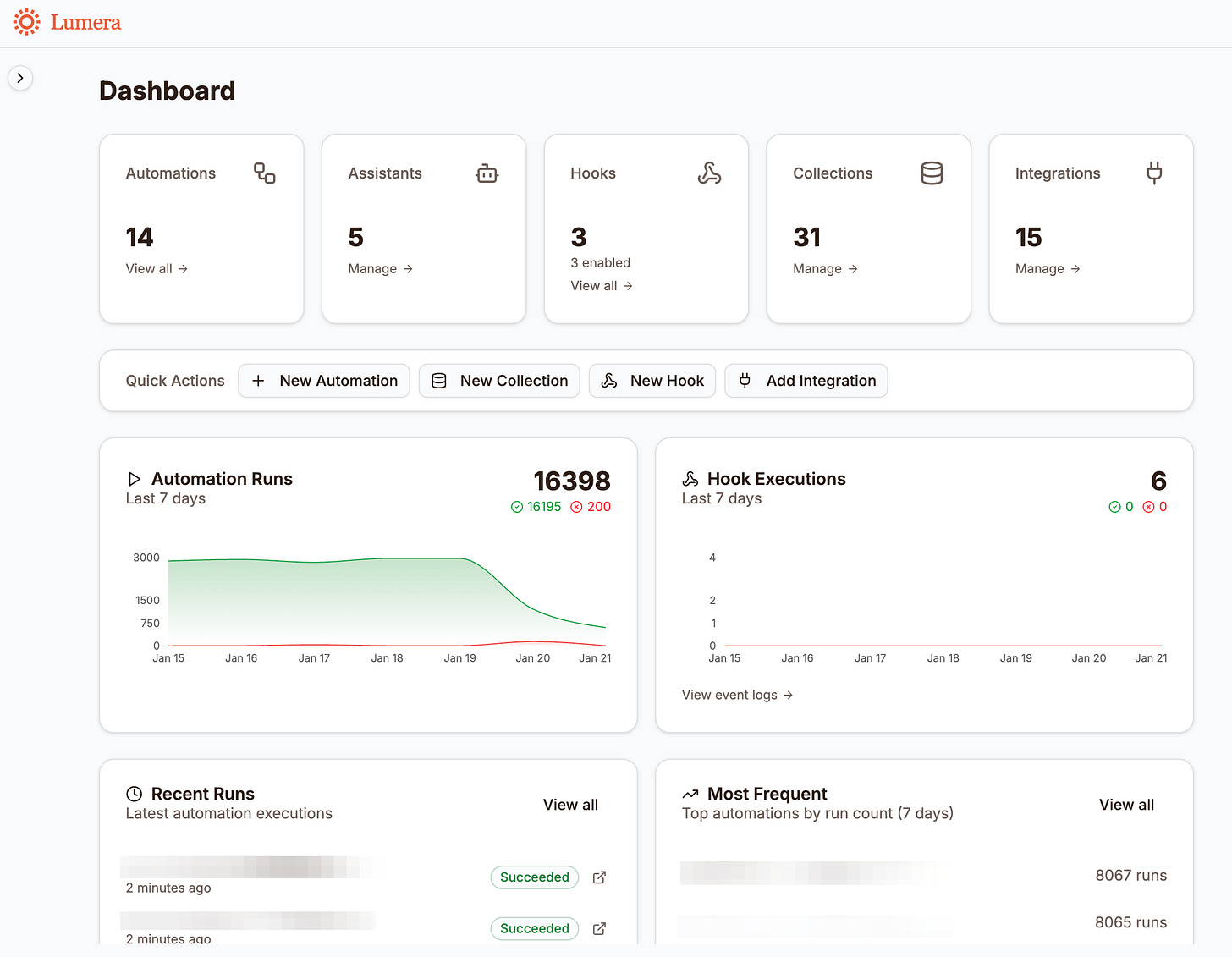

That gap is what we have been focused on closing at Lumera. Book a demo to see it in action.

The Practical Limits of Chatbots

Chatbots and prompt templates are useful tools, but it helps to be clear-eyed about what they actually provide.

At their core, they are capable assistants that wait for a human to bring them work. Someone still has to locate the document, paste it into the tool, select or write the prompt, review the output, and then move the result into a system that matters.

That may save time on an individual task, but it does not materially change the workload for teams dealing with high transaction volume and tight close timelines.

The limitation is not the intelligence of the models. Modern models can read invoices, deposit slips, and notices accurately. They can extract the right data and even reason about context. The limitation is integration.

On their own, chatbots cannot automatically pull documents from inboxes, match extracted data to bank transactions, write entries into a system of record, route exceptions for review, or maintain an audit trail that stands up to scrutiny. Those capabilities are not “nice to have” in finance. They are table stakes.

This is not a prompting problem. It is an infrastructure problem.

What AI at Scale Looks Like in Practice

When we talk about AI at scale, we are not talking about better prompts or more polished chat interfaces. We are talking about systems that do work end to end.

Consider a financial services organization we work with that manages accounting across dozens of entities. Before automation, a large team of accountants was involved in processing cash deposit transactions. Supporting documents arrived through email, shared drives, and uploads. Each document had to be found, read, interpreted, matched to a bank transaction, coded, reviewed, and manually entered into the ERP.

Today, that flow looks very different.

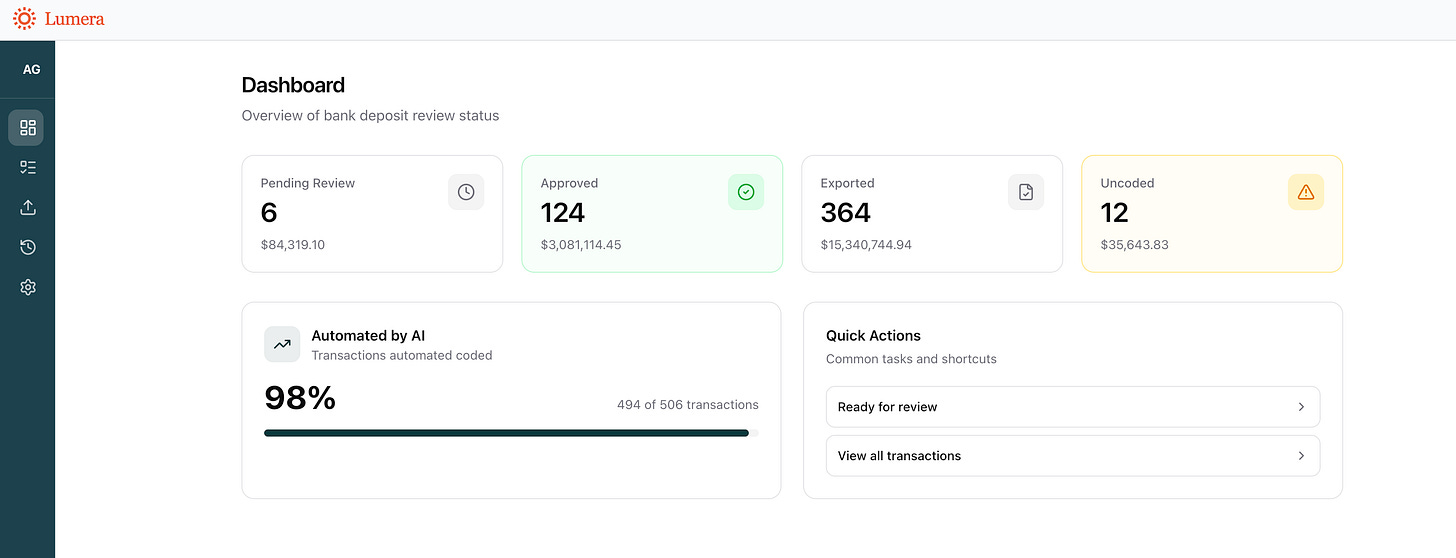

Documents are pulled automatically from wherever they arrive. There is no manual downloading, renaming, or organizing. Each document flows through an OCR and LLM pipeline without human prompting. The system extracts structured data such as transaction type, line items, amounts, and suggested coding, and it reliably distinguishes between different document types and revenue treatments.
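To make "structured data" concrete, here is a minimal sketch of how a pipeline might turn a model's JSON output into a typed record and reject it when the line items do not add up. It illustrates the idea only and does not describe any particular platform's internals. The field names (`document_type`, `suggested_account`, and so on) and the `parse_extraction` helper are assumptions made for this example.

```python
from __future__ import annotations

import json
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class LineItem:
    description: str
    amount: Decimal
    suggested_account: str  # hypothetical GL account code


@dataclass(frozen=True)
class ExtractedDocument:
    document_type: str      # e.g. "deposit_slip" (illustrative value)
    transaction_type: str
    total: Decimal
    line_items: tuple[LineItem, ...]


def parse_extraction(raw_json: str) -> ExtractedDocument:
    """Parse the model's JSON output and check that line items sum to the total."""
    data = json.loads(raw_json)
    items = tuple(
        LineItem(
            description=item["description"],
            # Amounts go through str() so Decimal never sees a binary float directly.
            amount=Decimal(str(item["amount"])),
            suggested_account=item["suggested_account"],
        )
        for item in data["line_items"]
    )
    total = Decimal(str(data["total"]))
    if sum((item.amount for item in items), Decimal("0")) != total:
        raise ValueError("line items do not sum to the document total")
    return ExtractedDocument(
        document_type=data["document_type"],
        transaction_type=data["transaction_type"],
        total=total,
        line_items=items,
    )
```

A check like this is cheap, and it catches a whole class of extraction errors before anything reaches a reviewer or a ledger.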

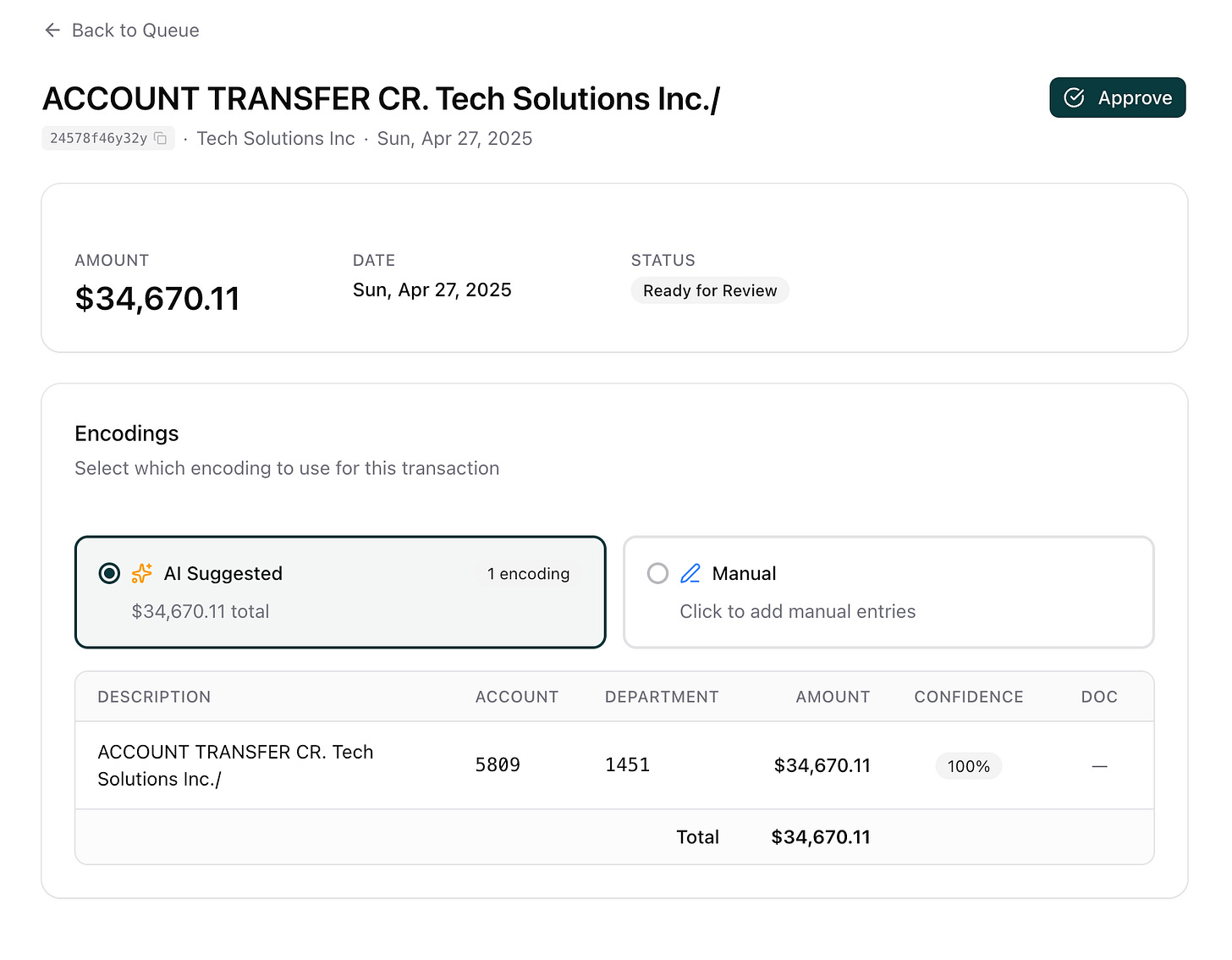

Based on that analysis, the system takes action. Deposits are matched to bank transactions. Entries are created in the system of record. Confidence scores determine which items flow through automatically and which require human review.
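The matching and routing step can be sketched in a few lines. This is an illustration under stated assumptions, not a production matcher: the 0.95 threshold, the three-day date window, and the names `find_match` and `route` are all hypothetical, and a real system would tune these per document type and handle partial or split deposits.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from decimal import Decimal
from typing import Optional, Sequence


@dataclass(frozen=True)
class Deposit:
    document_id: str
    amount: Decimal
    deposit_date: date
    confidence: float  # extraction confidence in [0.0, 1.0]


@dataclass(frozen=True)
class BankTransaction:
    transaction_id: str
    amount: Decimal
    posted_date: date


AUTO_POST_THRESHOLD = 0.95       # hypothetical cutoff for straight-through processing
DATE_WINDOW = timedelta(days=3)  # hypothetical tolerance between deposit and posting


def find_match(deposit: Deposit, transactions: Sequence[BankTransaction]) -> Optional[BankTransaction]:
    """Return the single transaction with the same amount inside the date window, if any."""
    candidates = [
        txn
        for txn in transactions
        if txn.amount == deposit.amount
        and abs(txn.posted_date - deposit.deposit_date) <= DATE_WINDOW
    ]
    # Zero or multiple candidates are both treated as "no confident match".
    return candidates[0] if len(candidates) == 1 else None


def route(deposit: Deposit, transactions: Sequence[BankTransaction]) -> str:
    """Decide whether an item posts automatically or goes to a reviewer."""
    if find_match(deposit, transactions) is None:
        return "human_review"
    if deposit.confidence < AUTO_POST_THRESHOLD:
        return "human_review"
    return "auto_post"
```

The design choice worth noting is that ambiguity defaults to review: an item posts automatically only when the match is unique and the confidence clears the threshold.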

Accountants are no longer reading every document. They review decisions, approve or correct exceptions, and focus their time on higher-value work. Approved entries are exported directly into the accounting system with a complete, auditable trail.
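One common way to make a trail auditable is to chain each record to the one before it, so that any later edit is detectable. The sketch below shows that general technique. It is an assumption for illustration, not a description of how any specific product stores its audit data, and the `AuditLog` class and its fields are hypothetical. A real log would also record timestamps and live in durable storage.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    """Stable SHA-256 over a record's fields (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def append(self, actor: str, action: str, entry_id: str) -> dict:
        """Add a record whose hash covers its content and the previous record's hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        record = {
            "actor": actor,
            "action": action,
            "entry_id": entry_id,
            "prev_hash": prev_hash,
        }
        record["hash"] = _digest(record)  # computed before the "hash" key is added
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and confirm each record points at its predecessor."""
        prev_hash = ""
        for record in self.entries:
            body = {key: value for key, value in record.items() if key != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True
```

For example, after `log.append("system", "auto_post", "JE-1")` and a reviewer's `approve` record, `log.verify()` returns `True`. Altering any earlier record makes it return `False`.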

The outcome is not that people were replaced. It is that the team stopped doing first-pass manual work at scale.

Moving From Assistance to Execution

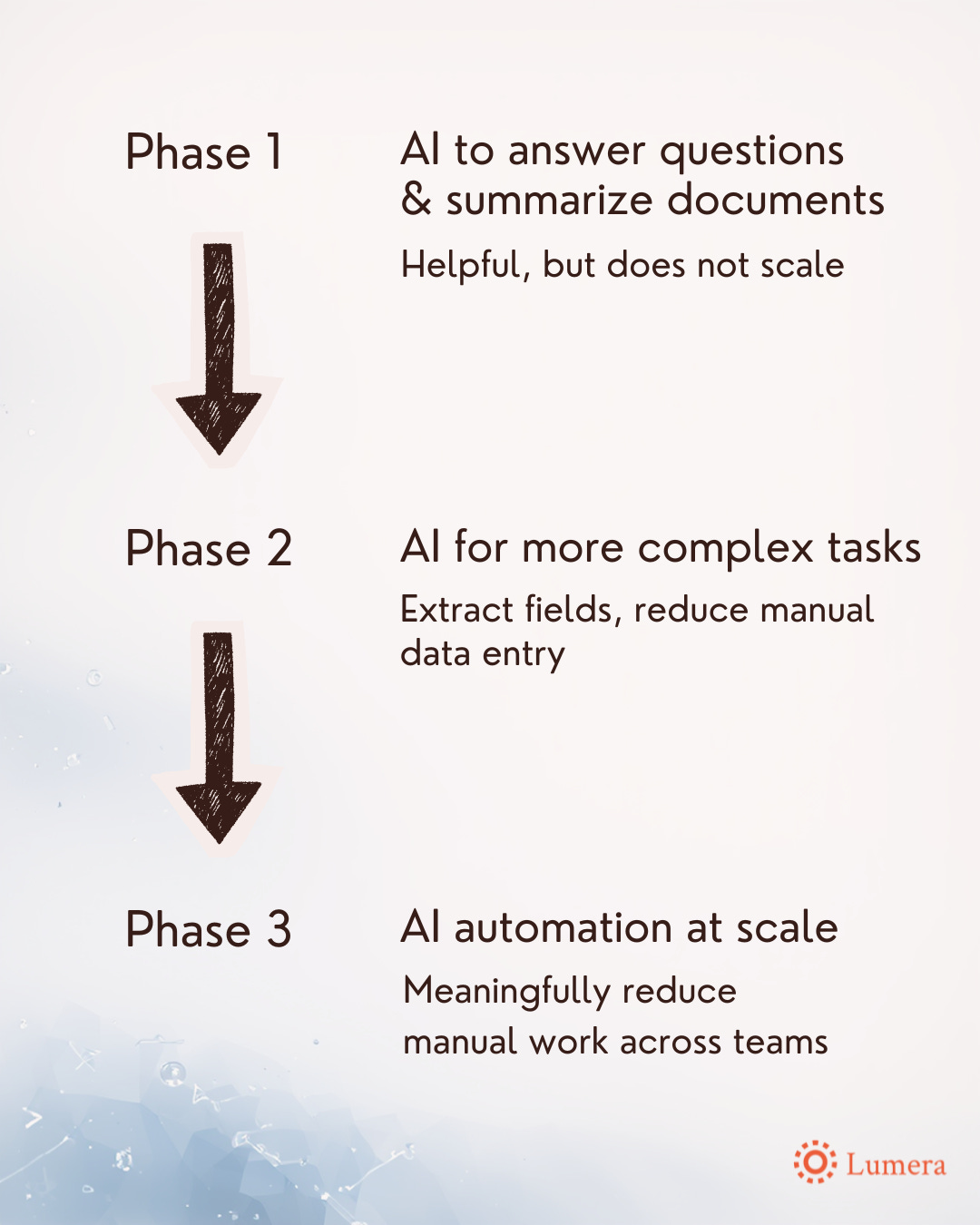

Over the last two years, a clear progression has emerged in how AI shows up in finance teams.

The first phase focused on answering questions and summarizing documents. Useful, but largely informational.

The second phase involved task-level assistance, such as extracting fields from documents. That reduced effort, but still required significant manual coordination.

The current phase is fundamentally different. AI can now ingest documents automatically, process them through reliable pipelines, take action in core systems, and surface only what truly requires human judgment.

Many teams are still operating between the first two phases. They are experimenting and learning, but they have not embedded AI deeply enough into operations to see a step change in workload.

The reason is not model maturity. The models are ready. What is missing is the connective tissue that makes AI production-grade: integrations, orchestration, monitoring, controls, and reliability.

Custom Software, Without the Old Tradeoffs

A reasonable concern at this point is whether this simply describes custom software development. Historically, building systems like this took many months and required heavy consulting support.

What has changed is the availability of purpose-built infrastructure.

Instead of rebuilding pipelines and integrations from scratch, we deploy on a platform designed specifically for AI-driven finance automation. That includes managed OCR and LLM pipelines, confidence scoring and versioning, human review workflows, bidirectional integrations with systems of record, and operational safeguards designed for finance teams.

This allows systems to be deployed in weeks rather than months, while still meeting the reliability and auditability standards finance teams expect.

AI Is Ready for Reasoning and Judgment

One of the more surprising realizations for many finance leaders is that AI is no longer limited to basic data extraction.

From an unstructured document, the system can determine what the document represents, which entity or account it relates to, how it should be coded under defined rules, whether revenue should be deferred, and whether it matches a bank transaction, all while providing transparent confidence scoring.

These are judgment-based decisions. The difference now is that AI can make them consistently, and humans can focus on verification rather than first-pass analysis.

A Question for Finance Leaders

Many finance leaders have already asked their teams to explore AI. Experiments have happened. Copilots have been tested. Prompt libraries may exist.

But have you seen an operational shift that actually removes work from your teams at scale?

Based on what we have seen, that shift does not come from better prompts. It comes from embedding AI directly into finance operations so that data is pulled automatically, decisions are made systematically, actions are taken in core systems, and humans are involved where judgment genuinely matters.

That is the work we are focused on.

If this resonates, the next step is not another experiment. It is seeing what execution looks like in practice.

See it in action. Book a demo to see what we can help you automate.
